Big Brother faces growing headwinds

Face Recognition and Data Protection

By Dr. Thomas Fischl


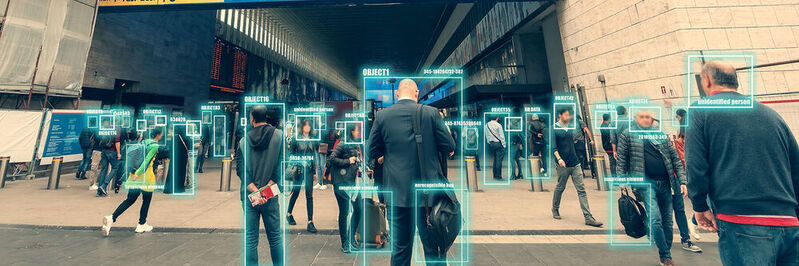

Content that users upload themselves and that is publicly accessible on the Internet is not "data protection-free" data. Publicly accessible data is not automatically free to use. Clearview AI has now had to acknowledge this in the USA as well.

(Picture: DedMityay – stock.adobe.com)

Clearview AI, based in the USA, provides a highly sophisticated search service. The company extracts publicly available personal data such as images and videos from social networks, news portals, search websites and other open sources and feeds it into its database. Using artificial intelligence (AI), it creates biometric profiles from this publicly accessible image and video material and enriches them with additional information such as geodata.

The company uses AI-supported biometric facial recognition software that creates a digital representation of a person's facial features. Combined with the geodata, the search engine can find a person in its huge database from a single photo. According to Clearview AI, the database contains more than 10 billion files.

In a legal dispute with Clearview AI, the US civil rights organization ACLU (American Civil Liberties Union) has now prevailed. In 2020, the ACLU filed a lawsuit accusing Clearview AI of violating Illinois law, which prohibits private companies from collecting or using biometric data without people's consent. Under the pressure of the proceedings, Clearview AI has agreed to a settlement under which it may no longer give most private companies and other private entities in the United States access to its facial database, whether free of charge or for a fee.

The agreement applies not only in Illinois but throughout the USA. However, government agencies may still use Clearview's app, except in Illinois. In Illinois, the app may not be sold to law enforcement and police authorities for a period of five years.

What is the significance of the decision beyond the United States?

The decision follows a series of regulatory and judicial proceedings that have already been brought worldwide against the commercial exploitation of biometric facial recognition. Efforts to show such business models their limits are under way not only in Europe but also in Canada and Australia, for example, where the data protection authorities have asked Clearview AI to delete all data and cease its activities.

What is the legal situation in Germany and Europe?

The data protection supervisory authorities in Europe take a largely uniform view: Clearview AI cannot rely on any legal basis for web scraping or for the further processing of the data. Users of Facebook, Instagram, LinkedIn or other social networks, for example, were not asked to consent to processing by Clearview AI before their data was accessed. The only conceivable legal basis is therefore the so-called "legitimate interest" under Art. 6(1)(f) GDPR.

No legitimate interest of Clearview AI under data protection law

As its legitimate interest, Clearview AI cites, among other things, the interest in effective law enforcement. However, it is doubtful whether making prosecution easier for altruistic reasons is really Clearview AI's primary interest. Accordingly, the supervisory authorities conclude that, in any case, no overriding legitimate interest is apparent.

Violation of other principles of data protection law

The data protection authorities also take the view that the absence of a legal basis violates the principle of lawfulness under Art. 5(1)(a) GDPR. Failing to inform data subjects that their data has been processed by Clearview AI additionally breaches transparency obligations. Clearview AI also violates the purpose limitation principle, because the company processed, used and stored the data for purposes other than those for which it was originally published. Users who upload images or videos to social networks could not reasonably expect their data to be stored for law enforcement and crime prevention purposes.

Actions of the data protection authorities

After the Canadian, Australian and French data protection authorities had requested that Clearview AI delete all data and cease its activities, the Italian data protection authority imposed a fine of EUR 20 million on Clearview AI in March 2022. A similar decision is expected in Austria.

Is there any protection against facial recognition software?

There is probably only one reliable way to protect yourself from facial recognition on social networks: do not use social networks, or at least do not share photos there. For many people, this is not a realistic option.

Filters may also offer some protection against surveillance. Several software tools on the market promise to give users back a degree of control: they add subtle artifacts to photos that are designed to mislead AI image recognition models.

Outlook

Clearer legal rules can be expected to take effect in the longer term. Until then, however, companies will continue to exploit the grey areas. At EU level, there appears to be a certain skepticism towards automated facial recognition, as the current draft of the new EU AI Regulation suggests: it provides for a ban on the use of real-time remote biometric identification in publicly accessible spaces for law enforcement purposes. However, the draft also provides for a number of exceptions, which are certain to be debated intensively.

About the author: Dr. Thomas Fischl is a lawyer and partner at the global law firm Reed Smith, where he is a member of the Entertainment & Media Group. A recognized expert in information technology law, he advises German and international companies on all legal issues related to digitization, digital media, IoT, virtual reality, social media and the data economy. He regularly deals with topics such as data protection, cybersecurity, risk management and compliance.
